Well, if the mere fact that there exists a functionally comprehensive, 100% Open Source middleware suite isn't remarkable enough, there are plenty of other reasons why IT practitioners should take a good look at WSO2's offerings.

For a few months now, I have had the opportunity to work closely with the company's engineers and to use their products to build (demo) distributed systems. This is what I have found.

There is significant innovation here, some of it really cool, and there may be even more that I haven't discovered yet.

1. OSGi bundles, functionality "features", and the fluid definition of a "product"

(WSO2 has no rigid products, although their brochures list about 12. The truth is that they have hundreds of capability bundles that can be combined with a common core to create tailored products at will.)

2. A "Middleware Anywhere" architecture that spans cloud and terrestrial servers

(When cloud-native features like multi-tenancy and elasticity are baked into the common core of the middleware product suite, there is no need for applications to be written differently for cloud and terrestrial deployments.)

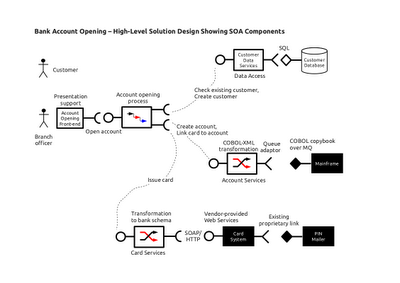

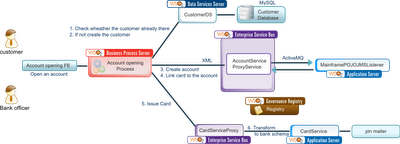

3. Not just an ESB - the right tool for every job

(As I describe in "Practical SOA for the Solution Architect", SOA requires three core technology components - the Service Container, the Broker and the Process Coordinator. They do different things and are not mutual substitutes. There are also eight supporting aspects at the technology layer. The ESB, being just the Broker component, cannot perform all of these functions. WSO2 has products corresponding to all of them.)

4. Making a federated ESB an economically viable architecture

(Economics forces many organisations to deploy their expensive ESB product in a centralised, hub-and-spokes architecture, which leads to a single point of failure and a performance bottleneck. But Brokers are best deployed in a federated manner close to service provider and service consumer endpoints. WSO2's affordable pricing model makes it easy for organisations to do the right thing, architecturally speaking.)

5. The "Server Role" concept - enabling a logical treatment of SOA topology

(Architects don't think in terms of products but in terms of functional capability. One would rather earmark an artifact for deployment to a "mainframe proxy" and another to a "customer complaints process node" than to an "ESB" or a "Business Process Server" instance. Tagging both servers and artifacts with a user-defined "Server Role" makes it possible to speak in these convenient abstractions.)
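To make the idea concrete, here is a minimal sketch in Python of how role tags could drive deployment. It is not WSO2's actual mechanism or API: the function `plan_deployment`, the server names and the artifact names are all hypothetical, and it assumes each server and each artifact carries exactly one role.

```python
from typing import Dict, List


def plan_deployment(servers: Dict[str, str], artifacts: Dict[str, str]) -> Dict[str, List[str]]:
    """Match artifacts to servers purely by their user-defined role tag.

    servers:   server name   -> role (hypothetical names, one role per server)
    artifacts: artifact name -> role
    Returns:   server name   -> artifacts it should receive, in name order
    """
    plan: Dict[str, List[str]] = {server: [] for server in servers}
    for artifact, role in sorted(artifacts.items()):
        for server, server_role in servers.items():
            if server_role == role:
                plan[server].append(artifact)
    return plan


# Illustrative topology: roles describe function, not product.
servers = {
    "host-a": "mainframe proxy",
    "host-b": "customer complaints process node",
}
artifacts = {
    "legacy-account-proxy": "mainframe proxy",
    "complaint-handling-process": "customer complaints process node",
}

plan = plan_deployment(servers, artifacts)
```

Notice that nothing in the sketch mentions an "ESB" or a "Business Process Server". The architect reasons only in terms of roles, and the mapping to product instances happens elsewhere.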

6. The CAR file as a version snapshot of a working and tested distributed system

(In distributed systems, upgrading the version of software X can break its interoperability with software Y. That's why version change in distributed systems is such a nightmare involving expensive regression testing and a higher probability of outage after an upgrade. But what if a related set of changes can be tested together, certified as interoperable, labelled as a single version, and deployed to multiple systems through a common mechanism? We've just described the Carbon Archive.)
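The snapshot idea can be illustrated with a small Python model. This is only a conceptual sketch, not the Carbon Archive format itself: the `Snapshot` class, the `deploy` and `is_consistent` helpers, and every artifact and node name are assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical stand-in for an archive: one version label covering
    a set of artifacts that were tested together."""
    version: str
    artifacts: Dict[str, str]  # artifact name -> artifact version


def deploy(snapshot: Snapshot, systems: List[str]) -> Dict[str, str]:
    """Record the same snapshot version on every target system."""
    return {system: snapshot.version for system in systems}


def is_consistent(deployed: Dict[str, str]) -> bool:
    """True when every system reports the same snapshot version."""
    return len(set(deployed.values())) <= 1


release = Snapshot(
    version="1.2.0",
    artifacts={"order-service": "3.1", "order-proxy": "2.0"},
)
deployed = deploy(release, ["node-a", "node-b"])
```

The point of the model is that the unit of change is the snapshot, not the individual artifact, so a mismatch between nodes shows up as a single version comparison.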

7. The CAR file and Carbon Studio as a unifier of diverse developer skillsets

(Developing distributed systems is challenging from a skills perspective. Writing business logic in Java and exposing it as a web service requires different skills from writing data transformation in XSLT, which is again different from specifying process logic in WS-BPEL. And then there are specialised languages to codify business rules, etc. WSO2 has a single IDE to support all these diverse developer needs - Carbon Studio. It also provides a single package that can hold all these types of artifacts - the Carbon Archive.)

8. The Admin console and support for configuration over coding

(Not every artifact needs to be "developed" through code. Every WSO2 server product has an Admin console that looks largely the same across products, yet is tailored to the peculiarities of the artifacts deployed on that server. Following an 80-20 rule, the bulk of the (simple) artifacts that need to be deployed on a server can be created through configuration using the Admin console.)

9. Port offsets and the ability to run multiple servers on the same machine

(Owing to their common core, all WSO2 server products listen on the same ports - 9763 (HTTP) and 9443 (HTTPS). Obviously, port conflicts will result when attempting to run two servers (or even two instances of the same server product) on a single machine. But with a simple configuration change (a "port offset"), a server can be nudged away from its default port to a non-conflicting one. A port offset of 1 will have a server listening on ports 9764 (HTTP) and 9444 (HTTPS), for example.)
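The arithmetic is simple enough to sketch in a few lines of Python. The default ports are the ones quoted above; the helper names `effective_ports` and `assign_offsets` and the server names are hypothetical, and the sketch assumes the offset is simply added to each default port, as in the example above.

```python
from typing import Dict, List, Tuple

# Default ports shared by the server products, as quoted in the text.
DEFAULT_HTTP_PORT = 9763
DEFAULT_HTTPS_PORT = 9443


def effective_ports(offset: int) -> Tuple[int, int]:
    """Return the (HTTP, HTTPS) ports a server listens on for a given offset."""
    if offset < 0:
        raise ValueError("port offset must be non-negative")
    return DEFAULT_HTTP_PORT + offset, DEFAULT_HTTPS_PORT + offset


def assign_offsets(server_names: List[str]) -> Dict[str, Tuple[int, int]]:
    """Give each server on one machine a distinct offset: 0, 1, 2, ..."""
    return {name: effective_ports(i) for i, name in enumerate(server_names)}


# Three hypothetical servers sharing a single machine without port conflicts.
layout = assign_offsets(["esb", "process-server", "registry"])
```

Here `effective_ports(1)` evaluates to `(9764, 9444)`, matching the example in the text, and each server in `layout` ends up with its own pair of ports.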

10. The dark horse - Mashup Server and server-side JavaScript

(Node.js has refocused industry attention on server-side JavaScript and the power that brings. But Node.js has no support for E4X! If you're doing XML manipulation of data from multiple sources, which is what mashups often entail, WSO2's Mashup Server is worth a serious look.)

11. Carbon Studio - A light-touch IDE for middleware developers

(The best GUIs are those built on top of non-visual scripting. Every artifact used by the WSO2 development process is text-based, including the build process that relies on Maven. Command-line junkies can coexist peacefully with those who prefer a GUI, because the IDE imposes no additional requirements on developers. It's purely an option, not an obligation. You can even use another IDE like IntelliJ IDEA with no loss of capability.)

12. Governance Registry - for control both mundane and subtle

(Governance or plain management? People often use the former term when they mean the latter. Whichever you mean, the WSO2 registry and repository tool is simple, flexible and powerful enough to hold the artifacts that support it. The registry is embedded inside every server product and is also available as a standalone server, so there is pleasing versatility in how one can store and share configuration settings and policy files.)

If you work with middleware, you should be seriously checking out the offerings of WSO2.